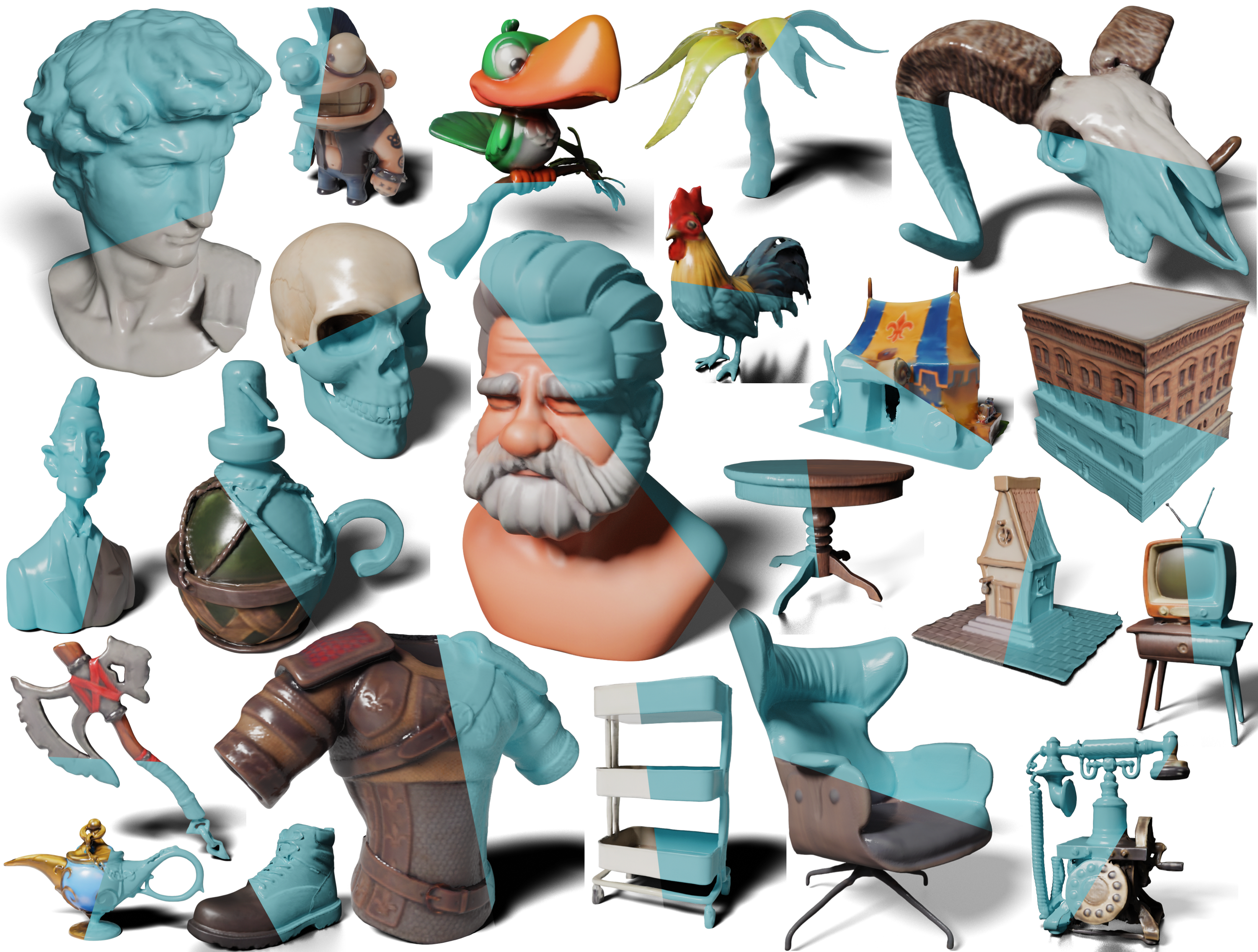

A sparse-view reconstruction model that explicitly leverages 3D native structure, input guidance, and training supervision.

I received my Ph.D. in Statistics from UCLA in 2025. Previously, I earned dual bachelor's degrees in Computer Engineering from the University of Illinois at Urbana-Champaign and Zhejiang University.

My research interests lie in computer vision, robotics, and cognition. I am actively engaged in pushing the boundaries of generalizable 3D vision, including 1) object understanding and 2) 3D reconstruction and generation.

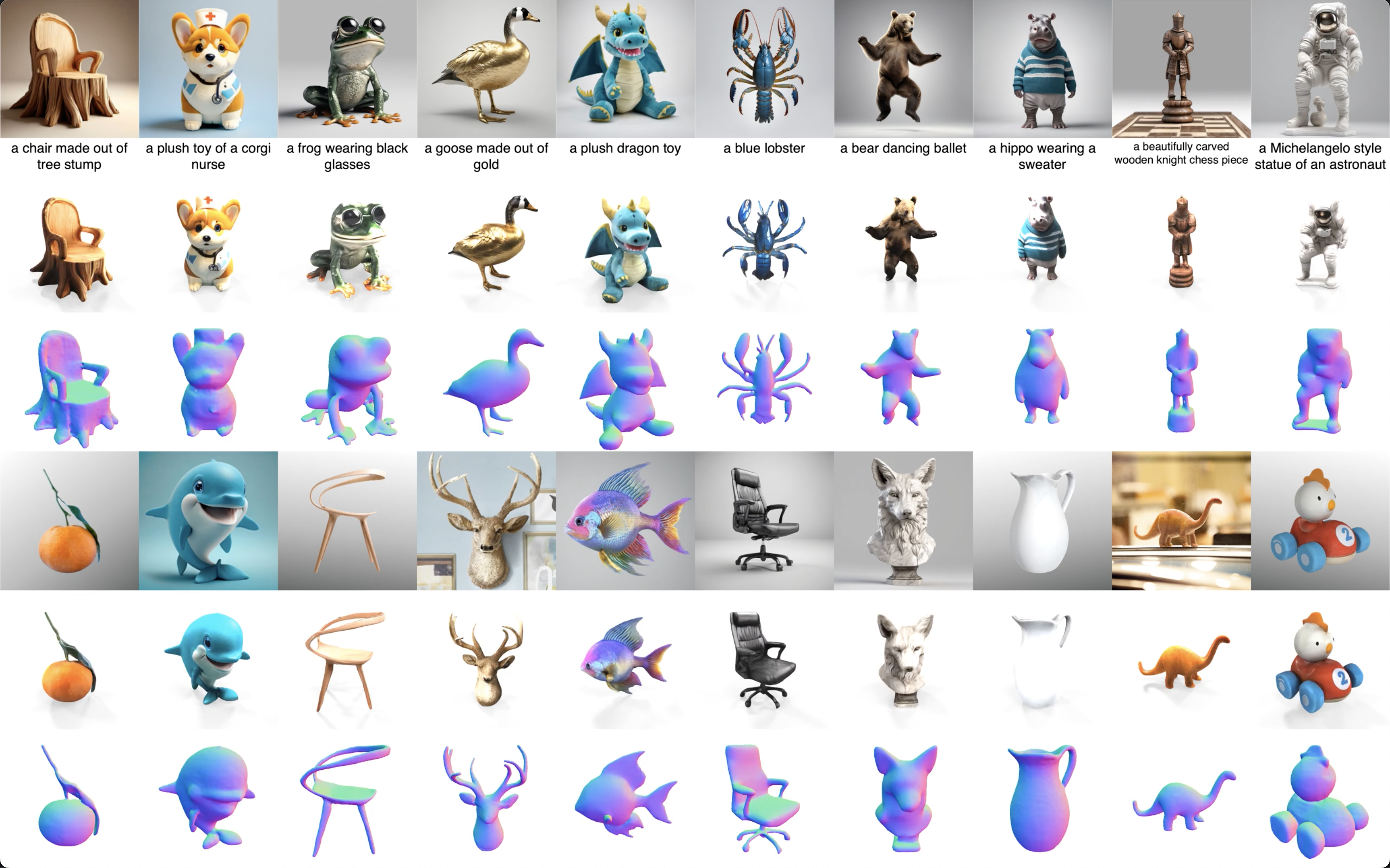

Given sparse unposed views, we leverage rich priors embedded in multiview diffusion models to predict their poses and reconstruct the 3D shape.

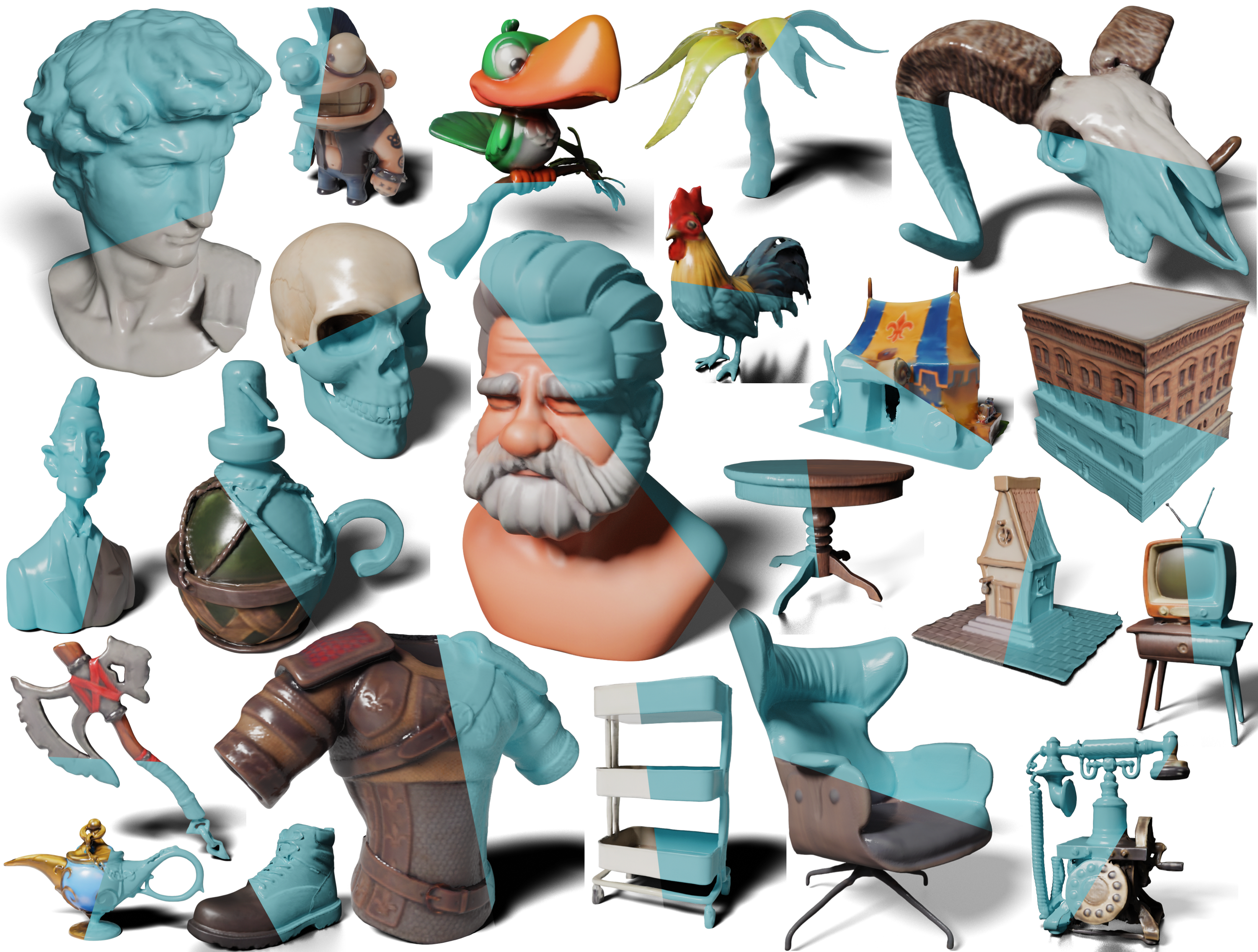

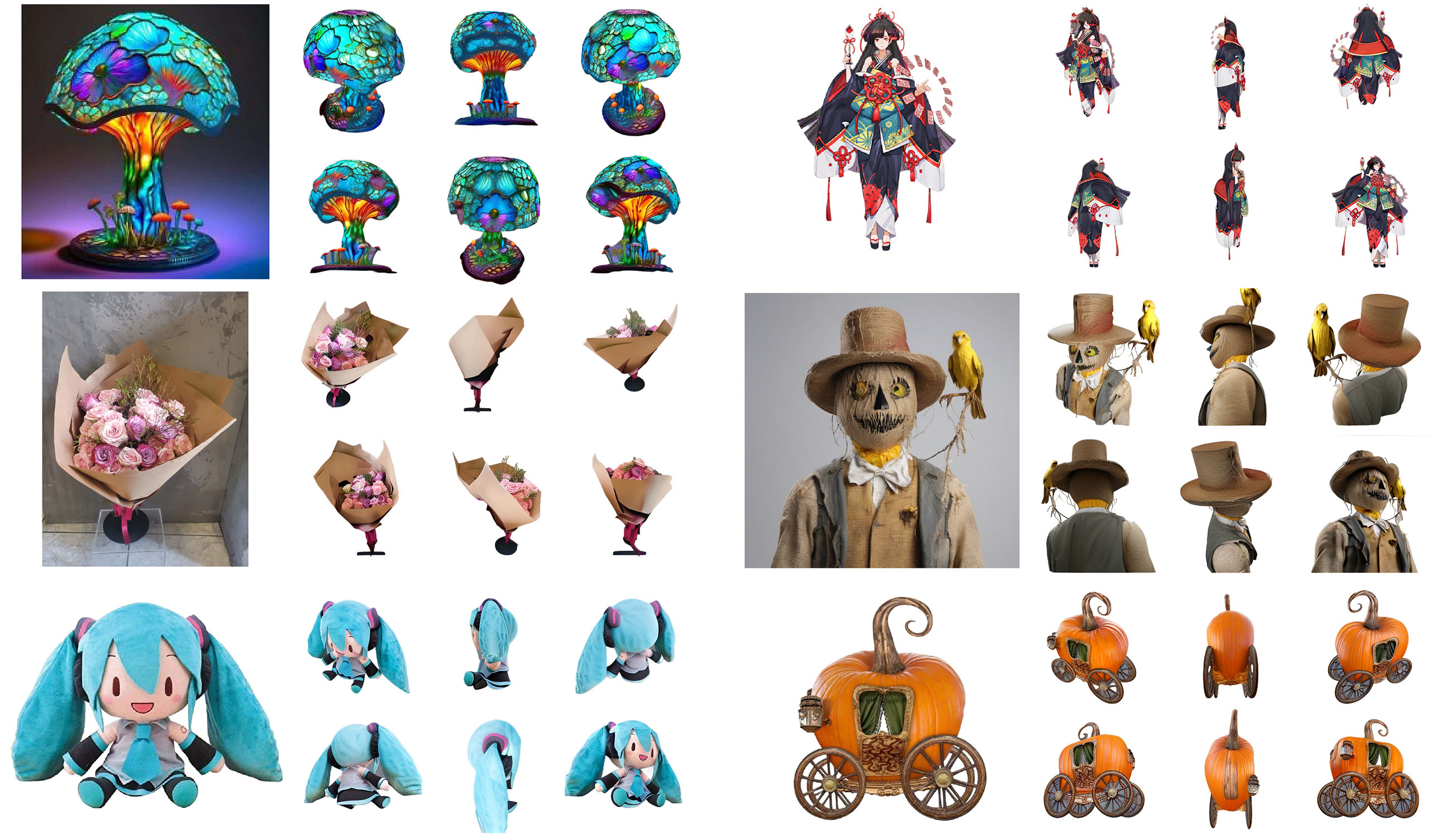

We propose a new pipeline in the One-2-3-45 paradigm, combining more consistent multi-view generation (Zero123++) with better reconstruction (multi-view-conditioned, 3D-native diffusion models).

We improve consistency and image conditioning in single-image multi-view generation.

We rethink how to leverage 2D diffusion models for 3D AIGC and introduce a novel forward-only paradigm that avoids time-consuming optimization.

We learn parts on articulated objects across categories.

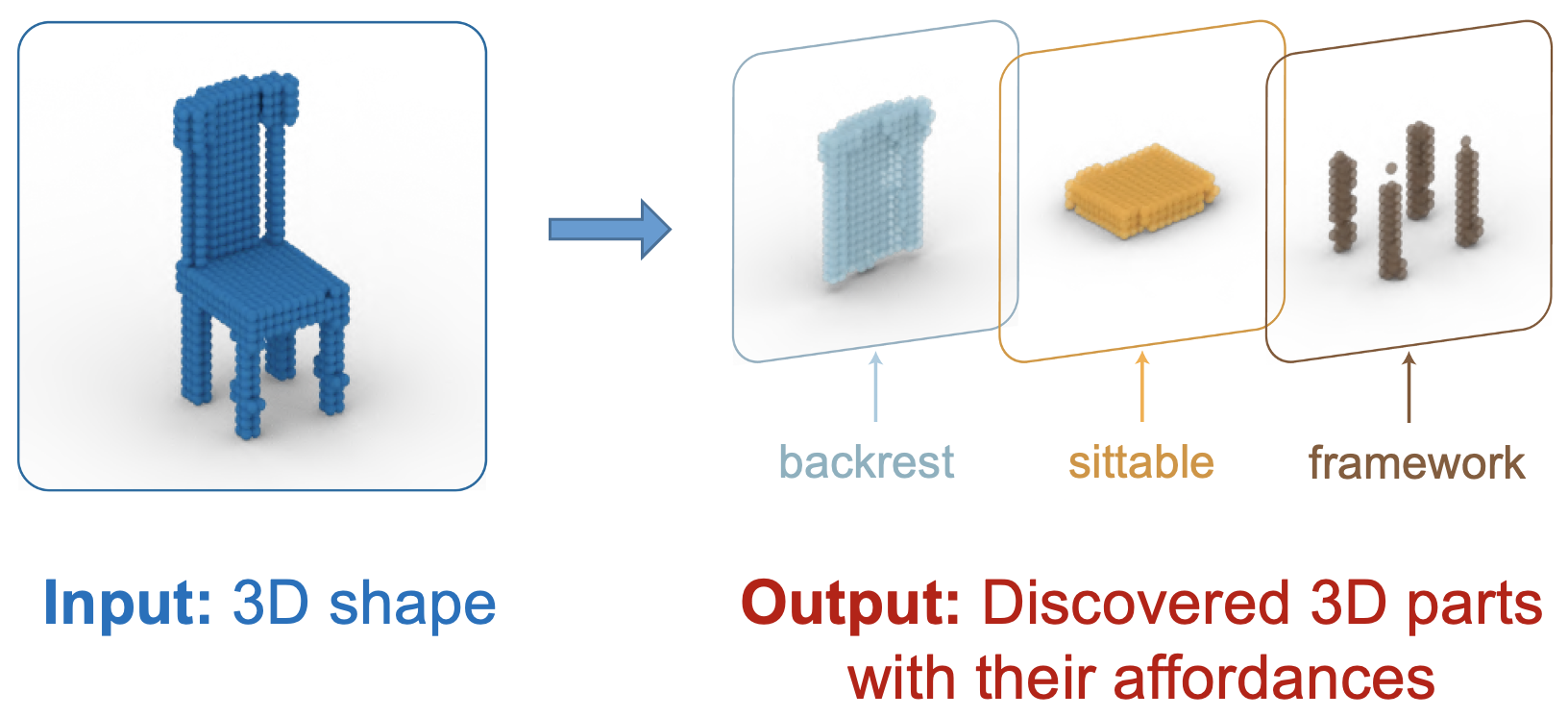

We discover part affordances on 3D objects across categories under weak supervision.